Google is expanding hands-free and eyes-free interfaces on Android.

For 2024's Global Accessibility Awareness Day, Google is showcasing some updates to Android that should be helpful to people with mobility or vision impairments.

Project Gameface, which lets gamers use their faces to move the cursor and perform common click-like actions on desktop, is now coming to Android.

The project lets people with limited mobility use facial movements, such as raising an eyebrow, moving their mouth or turning their head, to activate various functions. There's basic stuff like a virtual cursor, but also gestures: for instance, you can define the beginning and end of a swipe by opening your mouth, moving your head, then closing your mouth.

It's customizable to a person's ability, and Google researchers are working with Incluzza in India to test and improve the tool. For many people, the ability to simply and easily play many of the thousands of games (well, millions probably, but thousands of good ones) on Android will certainly be more than welcome.

There's a nice video showing the product in action and being customized; Jeeja, who appears in the preview image, talks about how much she needs to move her head to activate the gesture.

That kind of fine-tuned adjustment is as important as being able to set the sensitivity of your mouse or trackpad.

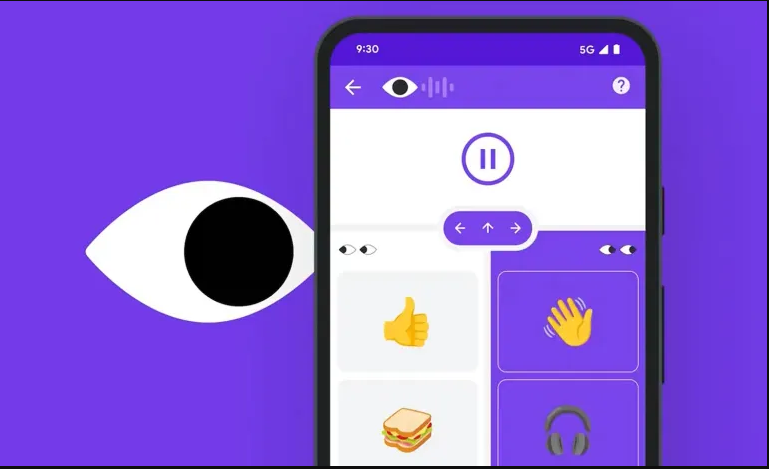

Another feature is aimed at people who can't easily type on a keyboard, whether on-screen or physical: a new non-text "look to speak" mode that lets users select and send emojis, either on their own or as stand-ins for a word or action.

You can even add your own photos, so someone could keep common phrases and emojis on speed dial alongside photos of frequently used contacts, all accessible with a few glances.

There is a range of tools out there, of varying efficacy no doubt, that help people who are blind or have low vision identify what the phone's camera is seeing. The possible use cases are nearly endless, so sometimes it's best to start with something simple, like finding an empty chair or recognizing the user's keychain and pointing it out.

Users will be able to add custom object or location recognition, so the instant description function gives them what they need rather than a list of generic objects like "a mug and a plate on a table." Which mug?!

Apple also demonstrated some accessibility features yesterday, and Microsoft has a few as well. Take a minute to browse these projects, which rarely get main-stage attention (though Gameface did) but are of major importance to the people they are designed for.